Ship production R + LLM workflows that actually work

The bridge between tidymodels and modern LLMs — tool-calling with ellmer, RAG with ragnar, production deployment via Shiny and vetiver, rigorous evaluation with vitals.

Every enrollment includes 1 month of prebuilt VM access with R and every package pre-configured. Zero setup — sign up, start coding. Explore membership for annual VM access across our platform.

Most R users get stuck between tidymodels and LLMs

You know how to fit a model. You’ve played with ChatGPT. But the bridge between them — tool-calling, RAG, production deployment, evaluation — is where everything breaks.

Python tutorials don’t translate

Every LLM course is in Python. You spend hours translating LangChain only to discover half of it doesn’t have an R equivalent.

Tool-calling feels like black magic

You read about "agents" and "tools" but can’t figure out how to register a tidymodels prediction function as something an LLM can actually call.

RAG tutorials skip the hard parts

They show chunking on a toy example. Then you try it on your PDFs and retrieval quality is 20%. Nobody teaches evaluation.

Deployment is an afterthought

Your LLM app works in RStudio. Putting it behind a Shiny app that doesn’t leak tokens or crash? That’s where projects die.

R-native from end to end

ellmer, ragnar, querychat, mall, vitals, vetiver. The modern R AI stack taught by the people who use it in production.

Tool-calling that calls your real models

Register a tidymodels workflow as a tool and build natural-language interfaces to your ML pipelines. Users ask questions, get predictions, and never leave R.

Production RAG with proper eval

Hybrid retrieval (BM25 + embeddings). Chunking strategies that work. RAG evaluation with vitals so you know when your system is good.

Full Shiny + vetiver deployment

Package models with vetiver, wrap them in Shiny, monitor cost and latency, and add observability. Your app runs in production, not on your laptop.

Watch the modern R AI stack write itself

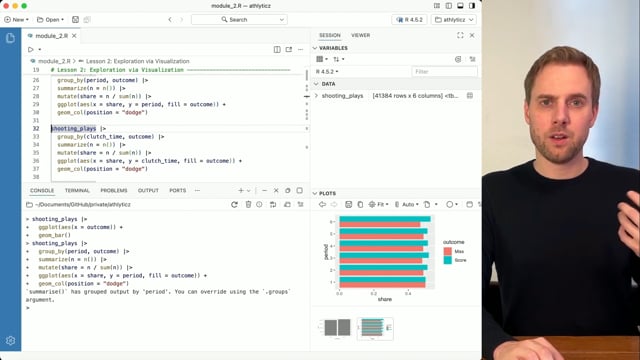

Most courses teach packages in isolation. Here you learn how ellmer, ragnar, tidymodels, and Shiny compose into real systems.


See your RAG pipeline come alive

Watch documents flow through chunking, embedding, hybrid retrieval, and context augmentation — into an LLM that answers with sources. This is Module 19 in one diagram.

Six portfolio-worthy systems

Not toy examples. Complete, deployable R applications that demonstrate production-grade ML + LLM integration. The kind recruiters actually want to see.

Full tidymodels ML pipeline

Recipes, workflow sets, regularized regression, random forests, neural nets, cross-validation, hyperparameter tuning.

NL → SQL database analyst

Natural language interface to a relational database. Tool-calling, safe query execution, structured output extraction.

Document Q&A system

Hybrid retrieval over multiple documents. BM25 plus embeddings, chunking strategies, retrieval tool registration.

LLM evaluation harness

Eval datasets, statistical model comparison, RAG-specific evaluation design. Know when your system is actually good.

Deployed ML model API

Package a tidymodels workflow with vetiver, version it, deploy as an API, monitor drift in production.

Production Shiny chatbot

Full LLM chatbot in Shiny. Tool-calling, RAG, prediction explanation, cost/latency monitoring, proper UX.

Built by R’s most respected LLM practitioners

Two instructors. One deep in package development and production R. One at the intersection of modeling, deployment, and modern AI workflows. Both writing R in production every day.

Dr. Nic Crane, Apache Arrow Core Maintainer

Independent R consultant with 15 years in R and 10+ years shipping R in production, plus a PhD in Statistics. Core maintainer of Apache Arrow for R and author of Scaling Up with R and Arrow (CRC Press, 2025). Deep experience across pharma, public health, academia, and startups.

Dr. Christoph Scheuch, Co-Creator of Tidy Finance & EconDataverse

Financial economist turned data scientist and product manager. Builds interactive dashboards, automated reporting, and custom R/Python packages. Extensive experience with CI/CD and Shiny deployments — Docker, ShinyProxy, Posit Connect, GCR.

21 modules. 110+ lessons. One complete path.

From tidymodels foundations through production LLM apps.

Build a rigorous ML foundation using tidymodels — from exploratory analysis through deployment. Sports data throughout. Real workflows, not toy examples.

You don’t need a separate ML course after this

Most LLM courses handwave the ML side. Here, you get the full tidymodels treatment: preprocessing with recipes, tuning with workflowsets, deployment with vetiver. By the time you hit Phase II, you’ve built production ML pipelines. The LLM layer sits on top of real modeling foundations.

Build the core LLM skill stack in R using ellmer. Prompts, conversations, structured data extraction, multimodal input, and model selection including local models via Ollama.

Prompt engineering isn’t magic — it’s structured thinking

You’ll go from basic chat to few-shot prompting, structured output that returns clean data frames, and multimodal workflows that extract tables from images. This is the skill stack that turns LLMs into actual R tools instead of toys.

The core differentiator of this course. Register R functions as tools. Build RAG systems with proper retrieval evaluation. Run NLP pipelines with mall. Measure everything with vitals.

Build a working RAG pipeline and learn how to evaluate it

Eight lessons on RAG covering BM25, embeddings, hybrid retrieval, tool registration, and context augmentation. Then eight lessons introducing evaluation with vitals, including RAG-specific eval design. By the end, you’ll have a working RAG system and a principled starting framework for evaluating it — with clear pointers to the deeper evaluation literature so you can go further when you need to.

Put it all together. Build complete LLM applications with Shiny, querychat for NL-to-SQL, and shinychat for production chatbots. Tool-calling, RAG, model explanation — all wired into a real deployable app.

You leave with a deployable app — not a notebook

By Module 21, you’re wiring everything together. Tool-calling inside Shiny. RAG inside chatbots. NL-to-SQL with querychat. Model explanation in-app. This is the portfolio project recruiters actually want to see — not another Kaggle notebook.

Two ways to get in

Final extension. Presale pricing closes at midnight ET on May 15, 2026. After that: same course, later date, higher price.

- 21 modules, 110+ lessons

- Full tidymodels + LLM R stack

- Tool-calling, RAG, evaluation, deployment

- Production Shiny chatbot capstone

- Dr. Nic Crane + Dr. Christoph Scheuch

- Certificate of completion

- All presale content included

- Launch access at course release

- Same 1-month VM access

- Same lifetime access

This is the final extension.

AthlyticZ Members enroll at $639. Explore Membership for details.


Build the R + LLM workflows the rest of the field is still Googling

21 modules. 110+ lessons. Full tidymodels + modern LLM stack. Six portfolio systems. 1 month of prebuilt VM access included.